Updated on April 16, 2026 • 7 min read

The Ultimate Guide To AI Interoperability

CX Analyst & Thought Leader


AI interoperability is quickly becoming the foundation of enterprise AI strategies, connecting all your existing, multi-vendor AI agents and systems under one unified ecosystem.

Here, we cover the basics of what AI agent interoperability is, how it differs from integration and orchestration, the top protocols driving it, potential risks, and best practices for implementing it successfully.

What Is AI Interoperability?

AI interoperability is the ability of different AI agents, models, and systems to autonomously collaborate and take collective action to achieve desired outcomes, even if those AI agents are from different vendors, platforms, or domains.

The goal is to provide interoperable agentic AI orchestration without vendor lock-in: to connect AI agents from different systems, providers, and frameworks into one unified, governed system with shared language and standards. Interoperability signals the end of single-purpose AI applications and the beginning of interconnected multi-agent systems (MAS) that autonomously execute multi-step, cross-functional workflows.

In simpler terms, interoperability allows all your AI agents to seamlessly communicate with each other, hand off tasks to each other, and share context with each other – no complex custom engineering required.

AI Interoperability vs AI Orchestration vs Integration

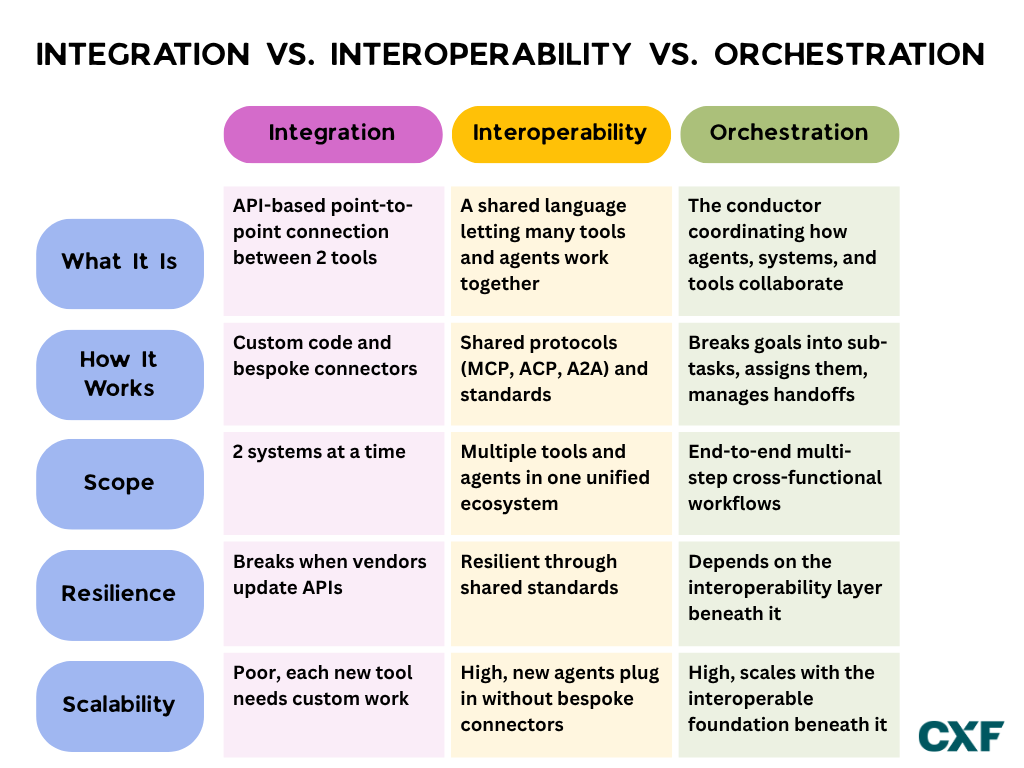

While AI agent interoperability, integrations, and orchestration work alongside each other, they’re not the same thing.

AI Integration

Integration is an API-based, point-to-point connection linking two tools or applications, allowing them to share data or trigger specific actions. For example, if you integrate your Voice AI agent with your CRM system, the agent will be able to use existing account data or conversation history in customer conversations. The problem is that each new tool or AI agent requires custom coding and bespoke connectors, making scalability a challenge. Additionally, integrations break when vendors update APIs or make changes to their systems, creating a need for even more custom engineering.

AI Interoperability

Interoperability creates a shared language that allows multiple tools and AI agents, not just two, to communicate, share data and memory, and take collaborative action within one unified ecosystem. It uses shared protocols (like MCP, ACP, or A2A) alongside shared standards to allow for cross-vendor collaboration while maintaining resilience. Because new agents and tools don’t require bespoke connectors, interoperability provides a much higher level of scalability than simple integrations.
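To put rough numbers on that scalability gap, here is a back-of-the-envelope sketch. It assumes the worst case for integration, where every tool needs its own bespoke connector to every other tool, and the simplest case for interoperability, where each tool implements one shared protocol once. Real deployments will land somewhere in between, and the function names are purely illustrative.

```python
def point_to_point_connectors(n: int) -> int:
    """Connectors needed if every pair of n tools gets its own bespoke integration."""
    return n * (n - 1) // 2


def shared_protocol_adapters(n: int) -> int:
    """Adapters needed if each of n tools implements one shared protocol."""
    return n


# Compare the two approaches as the number of tools grows.
for n in (3, 10, 25):
    print(n, point_to_point_connectors(n), shared_protocol_adapters(n))
```

Under these assumptions, the pairwise count grows quadratically while the shared-protocol count grows linearly, which is the intuition behind the scalability claim.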

AI Orchestration

Orchestration is the conductor that coordinates how agents, systems, and tools work together to achieve their shared goal. Orchestration breaks down larger objectives into specific sub-tasks, assigns specific actions and tasks to specific tools or agents, and manages the data handoffs between them. AI Orchestration executes complex, multi-step, and cross-functional workflows end-to-end – all while ensuring compliance and governance.
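As a rough illustration of the conductor idea, the sketch below runs a plan of sub-tasks in order, passes the accumulated context from one agent to the next, and records which steps ran. The `Orchestrator` class, the refund workflow, and every agent name are hypothetical, and a production orchestration layer would also handle planning, retries, and policy checks.

```python
from dataclasses import dataclass, field
from typing import Callable

# An "agent" here is just a function that reads the shared context and returns new data.
Agent = Callable[[dict], dict]


@dataclass
class Orchestrator:
    agents: dict[str, Agent] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)

    def register(self, name: str, agent: Agent) -> None:
        self.agents[name] = agent

    def run(self, plan: list[str], context: dict) -> dict:
        """Run each sub-task in order, handing the accumulated context to the next agent."""
        for step in plan:
            result = self.agents[step](context)
            context = {**context, **result}  # the data handoff between agents
            self.log.append(step)            # minimal audit trail of completed steps
        return context


# Hypothetical agents for a refund workflow.
def lookup_order(ctx: dict) -> dict:
    return {"order_total": 40.0}


def check_policy(ctx: dict) -> dict:
    return {"refund_allowed": ctx["order_total"] <= 100}


def issue_refund(ctx: dict) -> dict:
    return {"refunded": ctx["order_total"] if ctx["refund_allowed"] else 0.0}


orch = Orchestrator()
for name, fn in [("lookup_order", lookup_order),
                 ("check_policy", check_policy),
                 ("issue_refund", issue_refund)]:
    orch.register(name, fn)

result = orch.run(["lookup_order", "check_policy", "issue_refund"], {"order_id": "A-1"})
print(result["refunded"], orch.log)
```

Keeping the plan as plain data separate from the agents is a deliberate choice in this sketch: it means the same agents can be reused across different workflows.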

Why AI Interoperability Matters

AI agent interoperability is essential for today’s enterprise workforce. It eliminates data and application silos, prevents single vendor lock-in, autonomously executes complicated workflows, and provides a seamless customer experience.

The idea is catching on: 75% of organizations plan to deploy multi-agent frameworks within the next 18 months, and 45% of organizations that have already scaled AI agents are actively piloting multi-agent systems instead of single-use bots.[*]

The reported performance gains from interoperability are substantial: multi-agent AI systems successfully complete 70% more processes than individual AI agents, deliver 40% faster execution, and have 25% lower operating costs compared to traditional workflow processes.[*]

60% of multi-agent systems are expected to support interoperability by 2028, meaning that businesses can choose the AI agents that work best for their specific needs, regardless of provider.

Key Protocols Driving AI Interoperability

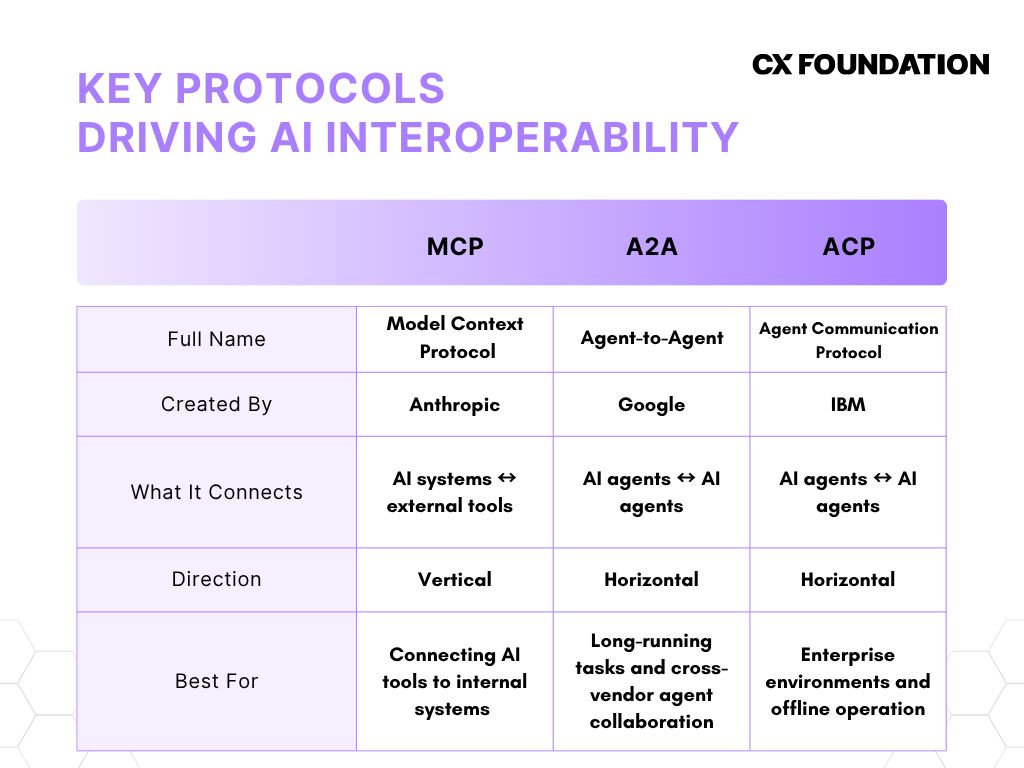

Three main protocols currently dominate the AI interoperability landscape: MCP, A2A, and ACP. Here’s a quick rundown of each:

Model Context Protocol (MCP)

Model Context Protocol (MCP) is an open-source framework from Anthropic that standardizes how AI systems, including LLMs, integrate and share data with external data sources and tools. Commonly referred to as the “USB-C for LLMs and AI agents,” MCP enables popular tools like Claude, ChatGPT, and Perplexity (to name a few) to communicate with your internal tools (think Salesforce, Slack, your CCaaS platform, etc.). Currently, MCP is the most commonly used standard.
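For a feel of what this looks like on the wire, here is a minimal sketch of a client asking a server to run one tool. It assumes, based on my reading of the MCP specification, that messages are JSON-RPC 2.0 and that tool invocation uses a `tools/call` method with a tool name and arguments. Treat the exact shape as an assumption and check the current specification before relying on it. The tool name `crm_lookup_account` and its argument are hypothetical.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched to them


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking a server to run one tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",  # assumed method name, per the lead-in above
        "params": {"name": tool_name, "arguments": arguments},
    })


# "crm_lookup_account" is a made-up tool, not part of any real server.
print(tool_call_request("crm_lookup_account", {"account_id": "ACME-42"}))
```

This sketch only builds the request. A real client would also negotiate capabilities with the server and handle the response.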

Agent-to-Agent (A2A)

Agent-to-Agent (A2A) is a protocol from Google that allows agents from different vendors to discover each other, explore each other’s capabilities, and collaborate. In A2A, each AI agent uses a standardized “Agent Card” that essentially serves as a digital business card. The Agent Card displays each agent's skills, versioning, and authentication requirements. A2A is modality agnostic, supporting text, images, and video. It is also well suited to long-running tasks, because it sends status updates while the work is in progress.
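The “digital business card” idea can be sketched as a small data structure plus a lookup by skill. The field names below are illustrative and are not the official A2A Agent Card schema. The agents and `example.com` URLs are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCard:
    # Illustrative fields only; the real A2A schema may name and nest these differently.
    name: str
    version: str
    skills: tuple[str, ...]
    auth_scheme: str
    url: str


def find_agents(cards: list[AgentCard], skill: str) -> list[AgentCard]:
    """Return every agent that advertises the requested skill."""
    return [c for c in cards if skill in c.skills]


# A hypothetical directory of published cards.
directory = [
    AgentCard("billing-agent", "1.2.0", ("refunds", "invoices"), "oauth2",
              "https://billing.example.com"),
    AgentCard("voice-agent", "0.9.1", ("speech-to-text",), "api_key",
              "https://voice.example.com"),
]

print([c.name for c in find_agents(directory, "refunds")])
```

Because each card states its own authentication requirements, a calling agent can tell what credentials it needs before it sends a single task.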

Agent Communication Protocol (ACP)

Agent Communication Protocol (ACP) is a protocol from IBM focusing on governance, auditability, and structured agent-to-agent communication, making it ideal for enterprise environments. ACP ensures that inter-agent communication and data sharing is secure and compliant. It also supports local and offline operations and is lighter-weight than other options. Given the similarity between ACP and A2A, some developers believe the two will eventually merge. For now, that remains to be seen.

Potential Roadblocks To AI Interoperability

Given that the concept of AI interoperability is relatively new, there are some risks and roadblocks to be aware of.

Protocol Fragmentation

Widely considered to be the most serious roadblock to AI interoperability (especially at scale), protocol fragmentation between MCP, A2A, ACP, and other frameworks means that no clear “standard of communication” between AI agents has emerged. In my view, it essentially re-creates the “frankenstack” problem of disconnected third-party integrations.

This means AI agents built on one protocol have trouble interacting with AI agents built on another. Protocol fragmentation isn’t just a developer’s nightmare: it comes with serious security and compliance risks. It also creates a “walled garden” effect, where enterprises are locked into a single protocol, essentially defeating the purpose of AI interoperability in the first place.

Legacy Infrastructure

Often, outdated legacy systems simply aren’t advanced enough to handle AI interoperability. Even if you manage to integrate them, you likely won’t be able to orchestrate a high number of AI agents – or complete a high volume of tasks – thanks to platform limitations.

The issue extends to data sharing: if AI agents can’t access data trapped in legacy systems, their performance may be limited. The agents won’t have the context needed to become truly transformational.

Security and Compliance

Unsurprisingly, AI interoperability has its fair share of security risks. Right now, there’s no “zero-trust equivalent” for cross-platform AI. This makes workflows easier to penetrate and data easier to access, potentially leading to cascading failures across the entire system.

AI interoperability can also create serious accountability and governance gaps. It’s hard to know who “owns” an error when multiple systems from multiple vendors are involved. Audit trails are more difficult to clearly decipher, data sovereignty becomes muddled, and iron-clad permissioning becomes more essential than ever.

Best Practices For AI Interoperability

While the goalposts are always moving, organizations that successfully implement AI interoperability follow the best practices below.

1. Design with AI Interoperability In Mind

Successful AI interoperability relies on a strong foundation for both data and protocols. From day one, implement key open-standards protocols like MCP, A2A, and ACP, focus on modality agnosticism, and ensure underlying data is consistently labeled and traceable.

2. Take A Zero-Trust Approach

In an interoperable environment, agents constantly make requests across systems, vendors, and data sources. Every one of those requests potentially exposes a vulnerability. A zero-trust approach doesn’t assume the safety of any AI agent or system, meaning it verifies every interaction against governance and security policies before moving to the next step. Enforce least-privilege access for every agent, build modular, containerized components, and narrowly scope credentials.
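Here is a minimal sketch of what “verify every interaction” can mean in practice: a request passes only if the credential belongs to the calling agent, has not expired, and explicitly includes the requested action. The `Credential` class and the scope strings such as `crm:read` are hypothetical. A real deployment would lean on an identity provider and signed tokens rather than an in-memory object.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    agent: str
    scopes: frozenset[str]  # narrowly scoped: only the actions this agent needs
    expires_at: float       # seconds since epoch


def authorize(cred: Credential, agent: str, action: str, now: float) -> bool:
    """Check one request. Nothing is trusted by default: every condition must pass."""
    if cred.agent != agent:
        return False               # credential belongs to a different agent
    if now >= cred.expires_at:
        return False               # expired credentials are rejected
    return action in cred.scopes   # least privilege: deny anything not explicitly granted


cred = Credential("billing-agent", frozenset({"crm:read"}), expires_at=1_000.0)

print(authorize(cred, "billing-agent", "crm:read", now=500.0))    # in scope and not expired
print(authorize(cred, "billing-agent", "crm:write", now=500.0))   # action not in scope
print(authorize(cred, "billing-agent", "crm:read", now=1_500.0))  # credential expired
```

The check runs on every request rather than once per session, which is the core of the zero-trust idea described above.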

3. Focus on Governance and Observability

Interoperable AI agents do a lot, across a lot of different systems, in a short amount of time – but you can’t govern what you can’t see. While AI agents operate autonomously, they still require an experience layer that interfaces between humans and AI agents. This layer should emphasize explainability, showing the agent's status, reasoning, and data sources. This makes it easier for human agents to audit decisions and trust outcomes. Paired with real-time monitoring, this approach lets organizations detect unusual patterns, orchestration drift, and compromised outputs before they cause cascading failures.

Treat agent permissions the way you treat human employee access: defined roles, regular reviews, and clear audit trails. The goal is a system where you can answer, at any moment, exactly which agents have access to what, why, and who approved it.
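One way to make that question answerable is to store the reason and the approver alongside every grant, so the audit trail is the permission record itself. The sketch below is a hypothetical in-memory registry. The class names, agents, resources, and approvers are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    agent: str
    resource: str
    reason: str       # why the access was granted
    approved_by: str  # who signed off on it


class PermissionRegistry:
    def __init__(self) -> None:
        self._grants: list[Grant] = []

    def grant(self, agent: str, resource: str, reason: str, approved_by: str) -> None:
        self._grants.append(Grant(agent, resource, reason, approved_by))

    def who_can_access(self, resource: str) -> list[Grant]:
        """Which agents have access to this resource, why, and who approved it."""
        return [g for g in self._grants if g.resource == resource]

    def access_for(self, agent: str) -> list[Grant]:
        """Everything a single agent is allowed to touch."""
        return [g for g in self._grants if g.agent == agent]


registry = PermissionRegistry()
registry.grant("billing-agent", "crm:read", "needs account history for refunds", "j.doe")
registry.grant("voice-agent", "crm:read", "personalizes greetings", "a.smith")
registry.grant("billing-agent", "payments:write", "issues refunds", "j.doe")

for g in registry.who_can_access("crm:read"):
    print(f"{g.agent} -> {g.resource}: {g.reason} (approved by {g.approved_by})")
```

A regular review then becomes a matter of walking the list and confirming that each reason still holds.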

Finally, deploy an overarching orchestration layer that coordinates tasks, manages dependencies, and handles agent-to-agent communication. Pair this layer with advanced telemetry and comprehensive observability.